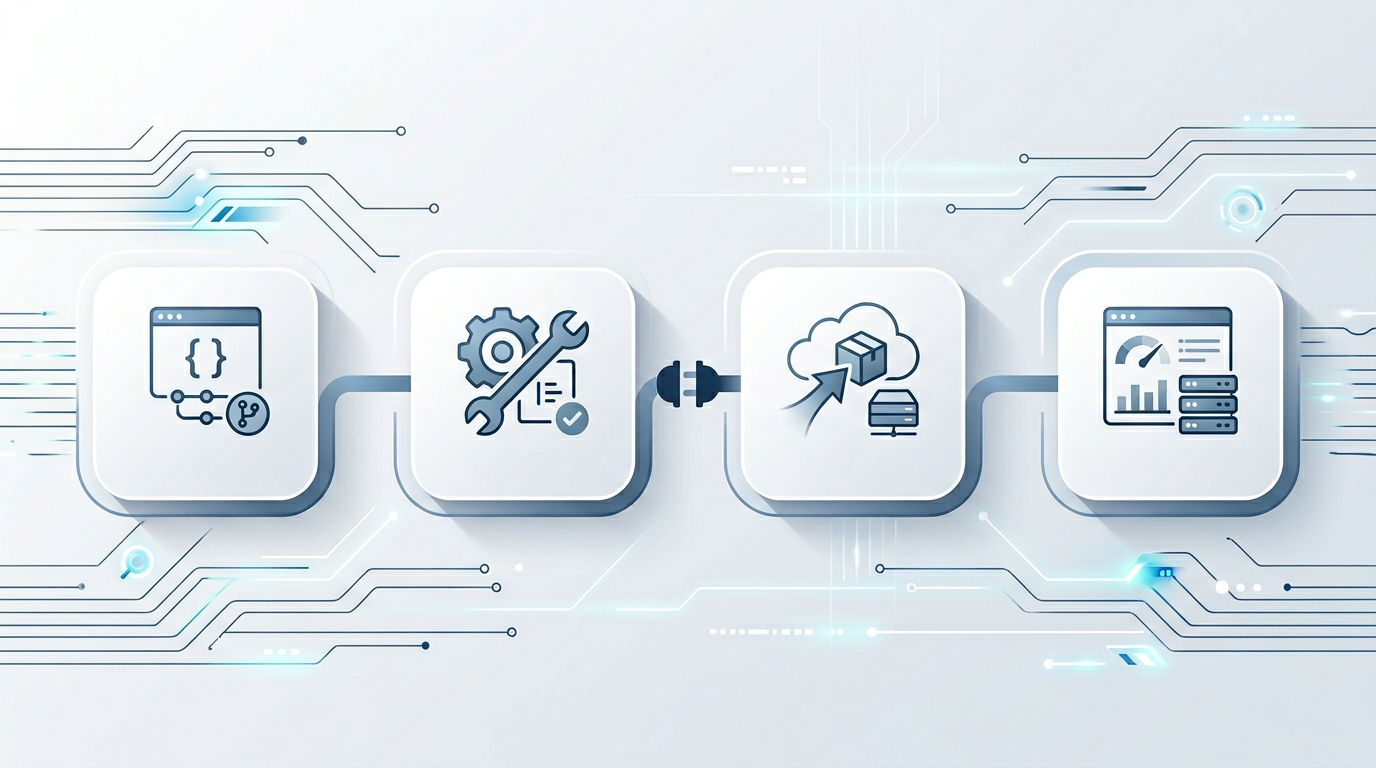

Agentic AI & copilots

Production assistants with tool/function calling, structured outputs, and human-in-the-loop (HITL) checkpoints. They are grounded in your tickets, docs, and CRM data, with offline evals and production telemetry so quality compounds instead of drifting.